I’m trying to build a lightweight inference engine from the text dump of the model (exported with dump_model()), which lists every tree with its split conditions and leaf values.

# Structure of the model exported using dump_model()

booster[0]:

0:[f53<0.5] yes=1,no=2,missing=1

1:[f11<7.5] yes=3,no=4,missing=3

3:[f8<1.62607923e+09] yes=7,no=8,missing=7

7:[f62<0.985777259] yes=15,no=16,missing=15

...

booster[1]:

...

booster[2]:

...

.

.

booster[99]:

...
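To make the structure above concrete, here is a minimal sketch of how such a dump could be read into memory. It assumes the default feature names (`f53`, `f11`, ...) and that leaf lines look like `15:leaf=0.0123`, which is not visible in the excerpt above. The names `parse_dump`, `SPLIT_RE` and `LEAF_RE` are hypothetical helpers, not part of XGBoost.

```python
import re

# Split node, e.g. "0:[f53<0.5] yes=1,no=2,missing=1"
SPLIT_RE = re.compile(
    r"^\s*(\d+):\[f(\d+)<([^\]]+)\]\s+yes=(\d+),no=(\d+),missing=(\d+)"
)
# Leaf node, e.g. "15:leaf=0.0123" (assumed format, no leaf is shown in the excerpt)
LEAF_RE = re.compile(r"^\s*(\d+):leaf=([^,\s]+)")


def parse_dump(text):
    """Return a list of trees.

    Each tree is a dict mapping node id to either a split tuple
    (feature index, threshold, yes id, no id, missing id) or a leaf value.
    """
    trees = []
    for line in text.splitlines():
        if line.strip().startswith("booster["):
            trees.append({})  # a "booster[i]:" header starts a new tree
            continue
        if not trees:
            continue  # ignore anything before the first header
        m = SPLIT_RE.match(line)
        if m:
            nid, feat, thr, yes, no, miss = m.groups()
            trees[-1][int(nid)] = (int(feat), float(thr), int(yes), int(no), int(miss))
            continue
        m = LEAF_RE.match(line)
        if m:
            trees[-1][int(m.group(1))] = float(m.group(2))
    return trees
```

Lines that match neither pattern (blank lines, `...`) are skipped, so the dump can be passed in as-is.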

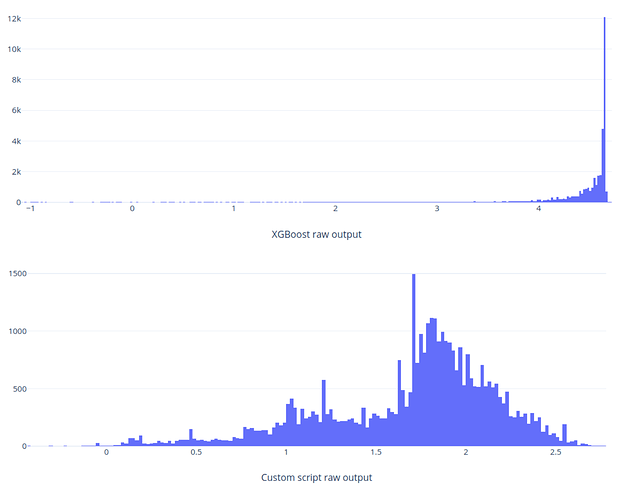

For each input, I walk every tree down to a leaf, sum the leaf values across all trees, and pass that sum through the logistic (sigmoid) function, since a ‘binary:logistic’ model outputs probabilities.
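The procedure I have in mind can be sketched roughly as follows. This is a simplified sketch, not XGBoost's actual implementation. It assumes each tree is a dict mapping node id to either a `(feature, threshold, yes, no, missing)` tuple or a leaf value, that `yes` is taken when `value < threshold`, and that `missing` is taken for NaN. The `base_margin` parameter is my assumption about where a global bias (the model's `base_score`, expressed in log-odds) would enter the sum. The function names are hypothetical.

```python
import math


def predict_margin(trees, x, base_margin=0.0):
    """Sum the leaf reached in every tree for one row x (indexed by feature number)."""
    total = base_margin
    for tree in trees:
        node = tree[0]  # node 0 is the root
        while isinstance(node, tuple):
            feat, thr, yes, no, miss = node
            value = x[feat]
            if value is None or math.isnan(value):
                nxt = miss  # missing values follow the "missing" branch
            elif value < thr:
                nxt = yes
            else:
                nxt = no
            node = tree[nxt]
        total += node  # node is now a leaf value
    return total


def predict_proba(trees, x, base_margin=0.0):
    """Logistic transform of the summed margin, as for 'binary:logistic'."""
    return 1.0 / (1.0 + math.exp(-predict_margin(trees, x, base_margin)))
```

The raw sum can be compared against `predict(..., output_margin=True)` to check the tree traversal separately from the sigmoid step. XGBoost also stores thresholds and feature values as 32-bit floats, so a double-precision comparison like the one here could send a value that sits very close to a threshold down the other branch.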

So far, though, I can’t get my outputs to match XGBoost’s.

So: how does XGBoost’s predict function work? Is there a computation step I’m missing?

Huge thanks!