I tried to reproduce the regression illustrated in the Monotonic Constraints tutorial:

https://xgboost.readthedocs.io/en/latest/tutorials/monotonic.html

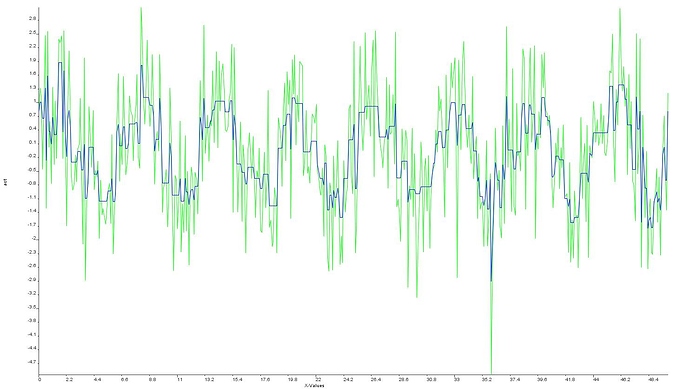

I generate points from a linear trend plus a sine wave plus random noise, shuffle them, and call XGBoost.train() on a training subsample. I then use the trained booster to predict the points in the remaining test subsample.
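For reference, here is a minimal sketch of the data preparation described above, in plain Java with no XGBoost calls. The class and method names (`SyntheticData`, `generate`, `features`, `labels`) are hypothetical, and the slope, amplitude, frequency, noise level, sample count, seed, and 80/20 split are assumed illustrative values, not values taken from the tutorial.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Random;

public class SyntheticData {
    /** One sample: a single feature x and its target y. */
    static final class Point {
        final float x;
        final float y;

        Point(float x, float y) {
            this.x = x;
            this.y = y;
        }
    }

    /**
     * Builds n samples of y = slope * x + amplitude * sin(frequency * x) + noise,
     * with x evenly spaced on [0, 1] and Gaussian noise of standard deviation noiseStd,
     * then shuffles them so a prefix/suffix split yields random train/test sets.
     */
    static List<Point> generate(int n, double slope, double amplitude,
                                double frequency, double noiseStd, long seed) {
        if (n < 2) {
            throw new IllegalArgumentException("need at least two points");
        }
        Random rng = new Random(seed);
        List<Point> points = new ArrayList<>(n);
        for (int i = 0; i < n; i++) {
            double x = (double) i / (n - 1);
            double y = slope * x + amplitude * Math.sin(frequency * x)
                    + noiseStd * rng.nextGaussian();
            points.add(new Point((float) x, (float) y));
        }
        Collections.shuffle(points, rng);
        return points;
    }

    /** Feature values in row-major order (one column, so one value per row). */
    static float[] features(List<Point> points) {
        float[] out = new float[points.size()];
        for (int i = 0; i < out.length; i++) {
            out[i] = points.get(i).x;
        }
        return out;
    }

    /** Target values, aligned index-by-index with features(). */
    static float[] labels(List<Point> points) {
        float[] out = new float[points.size()];
        for (int i = 0; i < out.length; i++) {
            out[i] = points.get(i).y;
        }
        return out;
    }

    public static void main(String[] args) {
        // Illustrative values (assumed, not taken from the tutorial).
        List<Point> all = generate(1000, 5.0, 1.0, 10 * Math.PI, 0.1, 42L);
        int nTrain = (int) (0.8 * all.size());
        List<Point> train = all.subList(0, nTrain);
        List<Point> test = all.subList(nTrain, all.size());
        float[] trainX = features(train);
        float[] trainY = labels(train);
        float[] testX = features(test);
        System.out.println(trainX.length + " train rows, " + testX.length + " test rows");
    }
}
```

The resulting arrays match the row-major layout taken by the XGBoost4J constructor `DMatrix(float[] data, int nrow, int ncol, float missing)` (with `ncol = 1` here), and the labels can be attached with `setLabel`. One thing worth ruling out: if the feature column handed to the booster is constant, the trees have nothing to split on and every prediction comes out as the same value.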

The issue is that I get the same predicted value for every point in the test subsample, instead of the trend-plus-sine-wave curve shown in the tutorial.

The parameters I use are:

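```java
import java.util.HashMap;
import java.util.Map;

Map<String, Object> params = new HashMap<>();
params.put("eta", 0.1);
params.put("max_depth", 3);
params.put("silent", 0);              // replaced by "verbosity" in newer XGBoost releases
params.put("objective", "reg:linear"); // deprecated alias of "reg:squarederror" in newer releases
```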

Would it be possible to share the code used to generate the charts shown in the tutorial (the parameters, plus the training and test sets)?

Thanks!